Originally published by Daniel Whitenack. Republished with the author's approval.

Photo by Amador Loureiro on Unsplash

In my main role as a data scientist at SIL International, I work on expanding language possibilities with AI. In practice, this means applying recent advances in Natural Language Processing (NLP) to low-resource and multilingual contexts. We work on things like spoken language identification, multilingual dialogue systems, machine translation, and translation quality estimation. You can find out more about these efforts at ai.sil.org.

Because these efforts focus on applications to local languages, our team is made up of technologists, linguists, and translators from all over the world. The team is growing, and it recently became very clear that we needed standardized, coherent practices for how we use data, run experiments, and consume compute resources (like GPUs).

When I started at SIL, I was the only data scientist in the whole organization. Now our growing AI and NLP teams include more than 10 data scientists and computational linguists. We are also collaborating with a handful of universities and industry partners on various projects. All of this is great, but it also means that many people (inside and outside of SIL), spread across many geographic locations, are doing R&D with a diverse set of data sets and computational resources.

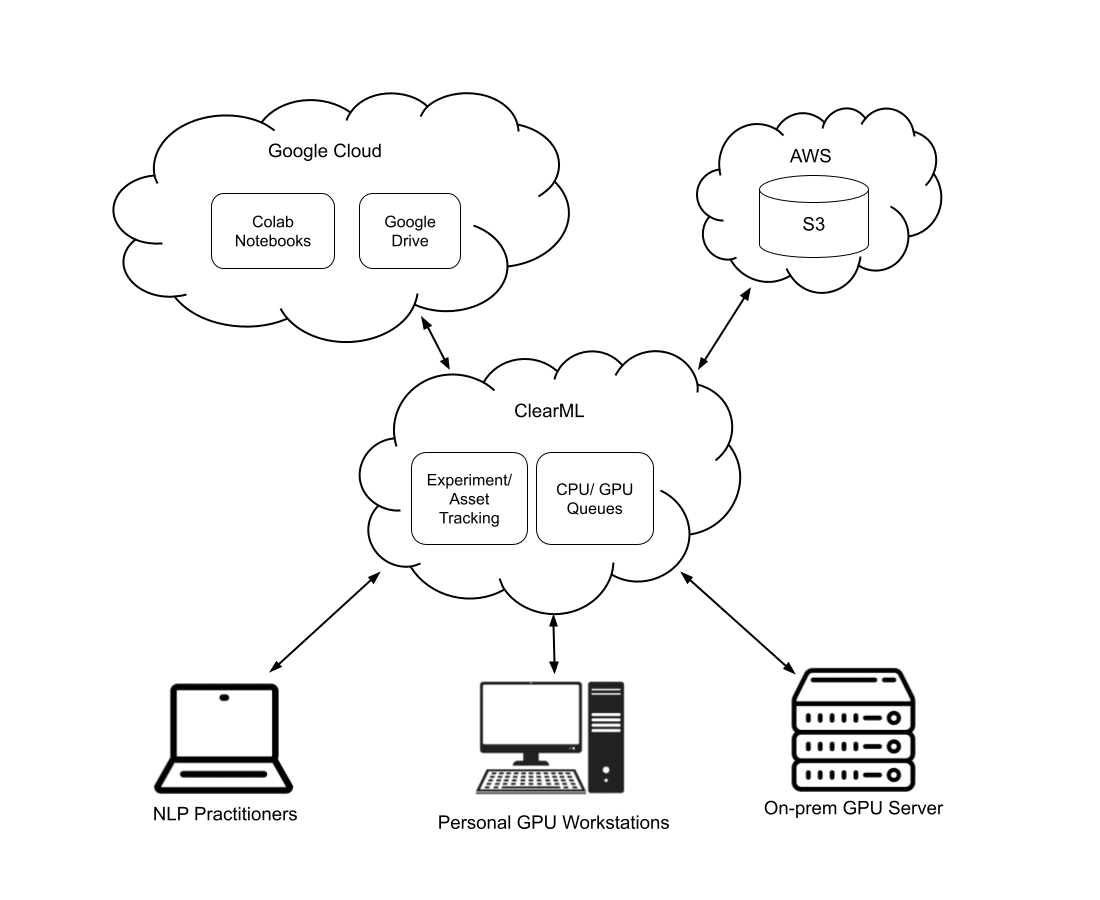

To coordinate this group of practitioners and make sure we are creating collective impact, we started investigating shared compute and data resources and MLOps systems. We have now settled into a set of workflows in which practitioners can use the following resources, “glued” together by ClearML MLOps:

- Google Colab – Our practitioners (like most data scientists and researchers) love notebooks. SIL uses G Suite for most docs and shared data, so we also use a lot of Google Colab.

- Private GPU resources – Several people at SIL who have been working on NLP for some time have purchased their own GPU workstations.

- Shared GPU resources – Recently, we purchased a 4x A100 GPU server that is shared between our internal teams and external partners.

- AWS S3 – We store data sets, model configurations, reference data sets, and model binaries in AWS S3 (along with other S3-API-compatible object stores like DigitalOcean Spaces).

- Google Drive – As mentioned, we are heavy users of G Suite, so we occasionally need to pull data or assets from Drive.

By using ClearML, all of our researchers can keep using the tools and resources they already love, while we can also do the following:

- Centrally and consistently track experimental input/output, data lineage, model provenance, etc. using ClearML’s MLOps capabilities

- Manage shared GPU queues on our “big” GPU server in the same way we manage private GPU queues on workstations

- Share experiments and code between collaborators

Our NLP R&D platform now looks like the diagram above. Regardless of where team members need or want to run their experiments (Colab, personal GPUs, or shared GPUs), they can import the ClearML Python client, which registers their experiment in our centralized ClearML deployment. The whole team can then explore and compare experiments running across our diverse infrastructure in the ClearML web app.
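To make the "just import the client" step concrete, here is a minimal sketch of registering a run from a notebook or workstation. It assumes the `clearml` package is installed and `clearml-init` has been run so the client points at the shared server. The project name, task name, hyperparameters, and the fake loss values are hypothetical placeholders, not SIL's actual setup.

```python
# Minimal sketch: register a training run with a central ClearML server.
# Assumes `pip install clearml` and a clearml.conf created by `clearml-init`.
# Project/task names and hyperparameters are hypothetical placeholders.


def default_hyperparameters() -> dict:
    # Hypothetical hyperparameters for a small NLP fine-tuning run.
    return {"learning_rate": 3e-5, "batch_size": 16, "epochs": 3}


def run_tracked_experiment(hyperparameters: dict) -> None:
    # Imported inside the function so this module loads without clearml installed.
    from clearml import Task

    # Task.init registers this run (code, environment, console output)
    # in the ClearML deployment configured by clearml-init.
    task = Task.init(project_name="nlp-rnd", task_name="colab-prototype")

    # connect() logs the dict as editable hyperparameters and returns it;
    # values edited in the web app are applied when an agent re-runs the task.
    hyperparameters = task.connect(hyperparameters)

    logger = task.get_logger()
    for epoch in range(hyperparameters["epochs"]):
        fake_loss = 1.0 / (epoch + 1)  # placeholder for a real training loss
        logger.report_scalar(
            title="loss", series="train", value=fake_loss, iteration=epoch
        )
```

Calling `run_tracked_experiment(default_hyperparameters())` from Colab or a workstation would create the task; the same code runs unchanged on any of the machines listed above, which is what lets experiments from different environments be compared side by side.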

Data sets from Google Drive and S3 can be pre-processed and registered as ClearML data sets. Each data set gets a unique identifier that ties it to the experiments that use it.
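As an illustration of that pre-process-and-register step, the sketch below cleans a text corpus and registers it as a versioned ClearML dataset. The cleaning rules, dataset and project names, and the S3 URL are hypothetical; the `Dataset.create`, `add_files`, `upload`, `finalize`, and `Dataset.get` calls are from the ClearML Python client.

```python
# Sketch: pre-process a text corpus and register it as a ClearML dataset.
# Hypothetical: cleaning rules, dataset/project names, and the bucket URL.
from pathlib import Path
from typing import Iterable, List


def clean_lines(lines: Iterable[str]) -> List[str]:
    """Hypothetical pre-processing: strip whitespace, drop blank lines,
    and remove exact duplicates while keeping first-occurrence order."""
    seen = set()
    cleaned = []
    for line in lines:
        text = line.strip()
        if text and text not in seen:
            seen.add(text)
            cleaned.append(text)
    return cleaned


def preprocess_file(src: Path, dst: Path) -> None:
    # Read a raw file (e.g. one pulled from Drive or S3) and write a cleaned copy.
    raw = src.read_text(encoding="utf-8").splitlines()
    dst.parent.mkdir(parents=True, exist_ok=True)
    dst.write_text("\n".join(clean_lines(raw)) + "\n", encoding="utf-8")


def register_dataset(local_dir: str, output_url: str) -> str:
    # Imported lazily so the helpers above work without clearml installed.
    from clearml import Dataset

    dataset = Dataset.create(
        dataset_name="example-corpus-cleaned", dataset_project="nlp-rnd/datasets"
    )
    dataset.add_files(path=local_dir)
    # Upload to an object store, e.g. "s3://example-bucket/clearml" (hypothetical).
    dataset.upload(output_url=output_url)
    dataset.finalize()  # freeze this version so experiments can rely on it
    return dataset.id  # the unique ID that links the data set to experiments


def fetch_dataset(dataset_id: str) -> str:
    from clearml import Dataset

    # Path to a cached, read-only local copy of the registered files.
    return Dataset.get(dataset_id=dataset_id).get_local_copy()
```

A training script would typically receive the returned ID as a hyperparameter and call `fetch_dataset` at startup, so the exact data version is recorded alongside each run.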

At the end of the day, structuring our research and development in this way is enabling new kinds of workflows. For example, one person working in Asia can prototype some model training in Google Colab and register that along with a corresponding data set in ClearML. Then another person working in Europe can “clone” that experiment, plug in a different data set, modify the hyperparameters, and queue up a new experiment (based on the first) to run on our shared GPU server in Dallas, TX, USA.
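The clone, swap data, tweak, and queue workflow can be sketched with the ClearML client as follows. It assumes (hypothetically) that the original experiment connected a dict containing `dataset_id` and `learning_rate` via `task.connect()`, which ClearML stores under its default `General` section. The queue name `shared-a100` is a placeholder.

```python
# Sketch: clone an experiment, point it at another data set, change a
# hyperparameter, and queue it for a remote GPU agent.
# Hypothetical: parameter names in the source task and the queue name.
from typing import Dict


def build_overrides(dataset_id: str, learning_rate: float) -> Dict[str, object]:
    # ClearML addresses parameters as "Section/name"; dicts passed to
    # task.connect() without a name land in the "General" section.
    return {
        "General/dataset_id": dataset_id,
        "General/learning_rate": learning_rate,
    }


def clone_and_enqueue(
    source_task_id: str,
    dataset_id: str,
    learning_rate: float,
    queue_name: str = "shared-a100",
) -> str:
    from clearml import Task

    source = Task.get_task(task_id=source_task_id)
    # The clone is a draft that copies the source's code, environment, and parameters.
    cloned = Task.clone(
        source_task=source, name=f"{source.name} (new data, lr={learning_rate})"
    )
    for name, value in build_overrides(dataset_id, learning_rate).items():
        cloned.set_parameter(name, value)
    # An agent listening on this queue (e.g. on the shared server) will run it.
    Task.enqueue(cloned, queue_name=queue_name)
    return cloned.id
```

On the receiving machine, an agent started with something like `clearml-agent daemon --queue shared-a100 --gpus 0` pulls queued tasks. Private workstations can run agents on their own queues in the same way, which is how shared and private GPUs end up being managed identically.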

We are super excited to see what this kind of tracked collaboration will enable for SIL’s AI work in the future. If you are interested in trying out ClearML for experiment tracking, guess what? It’s open source, and you just need to import the library. Find out more here.